
Investing in AI Hardware: From CPUs to XPUs

Securities.io maintains rigorous editorial standards and may receive compensation from reviewed links. We are not a registered investment adviser and this is not investment advice. Please view our affiliate disclosure.

Investing in AI Hardware: Picks and Shovels Approach

AI promises to be the most important change to our economy, productive systems, and society in decades, potentially making even the radical changes brought by the Internet look trivial in comparison.

It might make entire categories of jobs disappear, including drivers, translators, customer support agents, and web designers. Other jobs, like programmers, entry-level lawyers, and diagnosticians, might see a radical reduction in demand.

It should also create a lot of additional value and productivity for many other tasks, with the dominant AI software companies likely the first ones to reach market capitalizations previously unimaginable.

For all these reasons, capital markets and investors have been mesmerized by AI and pay a great deal of attention to the progress of the many tech giants in AI, as well as the strong competition emerging from Chinese tech giants like Alibaba and startups like DeepSeek.

Another way to play the AI boom is to follow the strategy known to work in every gold rush: don’t look for gold, but sell the picks and shovels. This has certainly worked for the companies that happened to be in the best spot to sell AI-optimized hardware. Nvidia (NVDA) turned its gaming graphics cards into AI-training chips and became the world’s most valuable company, passing an astonishing $4T market cap.

Because AI requires very specific hardware, mostly different from what earlier computing tasks needed, and represents such a massive business opportunity, the semiconductor industry is now racing to develop new hardware designed specifically for training and running AI programs.

While Nvidia is likely to stay one of the top companies in the sector, alternatives are now emerging and could provide interesting opportunities for investors who pay attention early.

Why AI Needs Specialized Hardware

Many Small Calculations

Initial efforts in AI used the same computing hardware as other programs, relying mostly on processors (Central Processing Units, or CPUs). CPUs are still important, but it quickly became apparent that they are not optimal for most of the methods currently used to develop AIs.

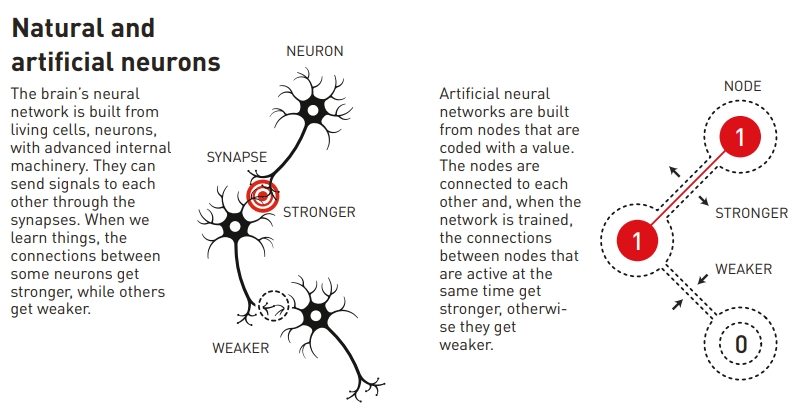

Neural networks and similar methods require a lot of relatively simple calculations rather than one very complex calculation. So many smaller processing units working in parallel are generally better than one massive and powerful CPU.

This is in large part why GPUs quickly became more popular, as graphics cards are inherently designed to perform thousands of smaller calculations in parallel.
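To make the "many small calculations" point concrete, here is a minimal sketch of one neural-network layer in plain Python. The weights and input are made-up toy values, and the function names are illustrative. Each output is an independent dot product, so the rows can be handed to separate workers in any order and still give the same result.

```python
from concurrent.futures import ThreadPoolExecutor

def dot(row, vector):
    """One 'small calculation': multiply pairwise and sum."""
    return sum(w * x for w, x in zip(row, vector))

def layer_sequential(weights, vector):
    """Compute every output one after another, as a single core would."""
    return [dot(row, vector) for row in weights]

def layer_parallel(weights, vector, workers=4):
    """Hand each row to a worker; no row needs another row's result."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: dot(row, vector), weights))

# Toy 3x4 weight matrix and 4-element input (illustrative values only).
weights = [
    [1, 0, 2, -1],
    [0, 3, 1, 1],
    [2, 2, 0, 0],
]
vector = [1, 2, 3, 4]

sequential = layer_sequential(weights, vector)
parallel = layer_parallel(weights, vector)
print(sequential, parallel)
```

Python threads will not actually run this faster; the sketch only shows that the work splits cleanly. A GPU exploits the same independence in hardware, across thousands of cores at once.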

Today’s AI training is largely based on neural networks, a concept that won the Nobel Prize in Physics in 2024, a reward we covered in detail in a dedicated article at the time.

Source: Nobel Prize

A second revolution in AI technology came with “transformers”. They solve traditional neural networks’ inability to efficiently process long sequences of data, a common characteristic of any natural language.

First introduced in 2017 by Google researchers, transformers are the root cause of the current explosion in AI capabilities. They are at the core of AI products like LLMs (Large Language Models), including ChatGPT.

Different Requirements

One important distinction in AI workflows is the difference between fine-tuning and inference, both of which have distinct hardware requirements.

- Fine-tuning involves training a model on domain-specific data, requiring significant computing power and memory. It is a very technical task, often at the very edge of AI science.

- Inference focuses on using an already-trained model to generate outputs, demanding less computational power but a stronger focus on low latency and cost-efficiency. This is more routinely done by AI experts deploying preexisting models to solve real-life problems.

So, while costs are obviously a concern for both fine-tuning/training and inference/use of AI, training will often require the best hardware possible, while usage tasks will focus more on the cost of hardware and energy consumption when picking the best hardware option.
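A back-of-the-envelope sketch can show how large the gap between the two workloads is. It relies on a commonly cited approximation for dense transformer models that does not come from this article: roughly 6 floating-point operations per parameter per training token, and roughly 2 per parameter per generated token. The model size and token counts below are hypothetical.

```python
def training_flops(params, tokens):
    """Rule of thumb (assumption): ~6 FLOPs per parameter per training
    token, covering the forward pass and the costlier backward pass."""
    return 6 * params * tokens

def inference_flops(params, tokens):
    """Rule of thumb (assumption): ~2 FLOPs per parameter per generated
    token, since only the forward pass is needed."""
    return 2 * params * tokens

# Hypothetical figures, chosen only for illustration.
params = 7_000_000_000              # a 7-billion-parameter model
train_tokens = 1_000_000_000_000    # 1 trillion training tokens
reply_tokens = 500                  # one medium-length answer

train_cost = training_flops(params, train_tokens)
reply_cost = inference_flops(params, reply_tokens)

# How many answers could be generated for the compute of one training run.
replies_per_training_run = train_cost // reply_cost
print(f"{replies_per_training_run:,}")
```

Under these assumptions, a single training run costs as much compute as billions of individual answers, which is why training favors peak performance while inference favors cost and energy per request.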

CPUs Vs GPUs

Central Processing Units (CPUs):

CPUs are general-purpose and not specifically AI hardware. They are, however, still essential for executing instructions and performing basic computations in AI systems.

Most of the software handling the interface with the final users of an AI system will also be CPU-centric, be it individual computers or cloud-based software.

Source: AnandTech

CPUs can also be used for very simple AIs, where dedicated hardware is not really required. This is especially true when the output is not especially urgent, and the relatively slower AI-processing of CPUs is not an issue.

So small models with small batches of data and calculation can perform well on CPUs. The omnipresence of CPUs in regular computers also makes them a good option for an average user not willing to invest in AI-specific hardware.

CPUs are also very reliable and stable, making them a good choice for critical tasks where avoiding errors is an important criterion.

Lastly, CPUs are useful for some of the tasks in AI training, like data loading, formatting, filtering, and visualization, generally in collaboration with other types of hardware.

Graphics Processing Units (GPUs):

Originally built for graphics rendering, GPUs are designed for parallel processing, making them ideal for training AI models that require handling large datasets. Switching from CPUs to GPUs has reduced training times from weeks to hours.

Due to their widespread availability and IT specialists’ experience in working with them, GPUs were the first type of computing hardware to be installed in large clusters to scale up AI research.

Source: Aorus

Also instrumental in GPUs’ success was Nvidia’s development of CUDA, a general-purpose programming interface for its GPUs that opened the door to uses other than gaming. Nvidia built it because some researchers were already using GPUs to perform calculations instead of the usual supercomputers.

“Researchers realized that by buying this gaming card called GeForce, you add it to your computer, you essentially have a personal supercomputer.

Molecular dynamics, seismic processing, CT reconstruction, image processing—a whole bunch of different things.”

Today, GPUs are still among the most sought-after types of AI hardware, with Nvidia barely managing to produce enough to satisfy the demand of tech giants building gigawatt-scale AI data centers.

This is also the beginning of the “super GPU era”, with Nvidia’s recent release of the GB200 NVL72.

This hardware is designed to act as a single massive GPU straight out of the factory, instead of having to network many small ones. This makes it a lot more powerful than even the previously record-breaking H100 model.

Source: Nvidia

This design should also be a lot more energy efficient, a crucial point because, at the speed at which AI data centers are being built, the AI industry might run short of energy before it runs short of chips. More computing and energy efficiency also means less waste heat, which eases the overheating issue as well, at least temporarily.

| Hardware Type | Best Use Case | Speed | Energy Efficiency | Flexibility |
|---|---|---|---|---|
| CPU | General-purpose tasks | Low | High | Very High |
| GPU | AI training & parallel tasks | High | Medium | Medium |
| TPU | Tensor operations & transformers | Very High | High | Low |
| ASIC | Single task acceleration | Very High | Very High | Very Low |
| FPGA | Reconfigurable AI workloads | Medium | Medium | High |

The Rise of ASICs and AI Hardware

Application-Specific Integrated Circuits (ASICs) are computing hardware designed specifically for a given computing task, making them even more specialized than still relatively generalist GPUs.

So they are less flexible and programmable than general-purpose hardware.

As a rule, they tend to be more complex. They are also generally more costly, both due to a lack of economies of scale for their production and the cost of custom designs.

They are, however, much more efficient at their given task, and normally produce an output quicker with a lot less wasted computing power and energy.

ASICs and other AI-specific hardware are rising in utilization, as the field is progressively noticing that some calculations are not ideally done on GPUs but require more specialized equipment.

Tensor Processing Units (TPUs)

TPUs were developed by Google (GOOGL) specifically for performing tensor calculations, the matrix math at the heart of neural networks, including transformers. They are optimized for high-throughput, low-precision arithmetic.

Source: C#Corner

This gives TPUs high performance, efficiency, and scalability for the training of large neural networks.

TPUs possess specialized features, such as the matrix multiply unit (MXU) and proprietary interconnect topology, that make them ideal for accelerating AI training and inference.

TPUs power Gemini, and all of Google’s AI-powered applications like Search, Photos, and Maps, serving over 1 billion users.

This hardware type can significantly speed up the development and workings of neural networks, where the occasional error is less significant, as these models are highly reliant on statistics and a large number of calculations to begin with.
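The trade-off between precision and speed can be illustrated with a small sketch. It uses a simple symmetric 8-bit quantization scheme as a stand-in for low-precision arithmetic in general; it is not a description of how TPUs are implemented internally, and the weights and inputs are made-up values.

```python
def quantize(values, bits=8):
    """Map floats to signed integers that share one scale factor.
    Assumes at least one value is nonzero."""
    qmax = 2 ** (bits - 1) - 1                  # 127 for 8 bits
    scale = max(abs(v) for v in values) / qmax
    return [round(v / scale) for v in values], scale

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

# Illustrative values only.
weights = [0.12, -0.53, 0.91, 0.04, -0.77]
inputs = [1.50, 0.25, -0.80, 2.00, 0.10]

# Reference result in full floating-point precision.
exact = dot(weights, inputs)

# Same dot product using small integers, rescaled once at the end.
q_w, s_w = quantize(weights)
q_x, s_x = quantize(inputs)
approx = dot(q_w, q_x) * s_w * s_x

relative_error = abs(approx - exact) / abs(exact)
print(exact, approx, relative_error)
```

The integer version lands close to the exact answer while only ever multiplying small whole numbers, which is much cheaper to do in silicon. For statistical models that average over a very large number of such operations, a small error per operation is usually acceptable.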

Among the end-user tasks best suited to TPUs are deep learning, speech recognition, and image classification.

Neural Network Processors (NNPs):

Also linked to Neural Processing Units (NPUs) and called neuromorphic chips, NNPs specialize in neural network computation and are designed to mimic the neural connections in the human brain. They are also sometimes called AI accelerators, although this term is less well-defined.

An NPU will also integrate storage and computation through synaptic weights. So it can adjust or “learn” over time, leading to improved operational efficiency.

An NPU includes specific modules for multiplication and addition, activation functions, 2D data operations, and decompression.

The specialized multiplication and addition module is used to perform operations relevant to the processing of neural network applications, such as calculating matrix multiplication and addition, convolution, dot product, and other functions.
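The operations listed above all reduce to the same primitive, the multiply-accumulate (MAC) step. The sketch below is a plain-Python illustration of that idea, not a model of any specific NPU: a dot product is a chain of MACs, and a one-dimensional convolution is a dot product repeated at each position of a sliding window.

```python
def mac(accumulator, a, b):
    """One multiply-accumulate step: the primitive being accelerated."""
    return accumulator + a * b

def dot_product(xs, ws):
    """A dot product is a chain of MAC steps."""
    acc = 0
    for x, w in zip(xs, ws):
        acc = mac(acc, x, w)
    return acc

def conv1d(signal, kernel):
    """Slide the kernel over the signal; each output is one dot product.
    The kernel is not flipped, as is conventional in neural networks."""
    width = len(kernel)
    return [dot_product(signal[i:i + width], kernel)
            for i in range(len(signal) - width + 1)]

# Illustrative values: a rising signal and a simple difference kernel.
signal = [1, 2, 3, 4, 5]
kernel = [1, 0, -1]
outputs = conv1d(signal, kernel)
print(outputs)
```

Dedicated hardware can wire many MAC units together so that a whole window, or a whole matrix tile, is processed in one step rather than one multiplication at a time.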

This specialization can help an NPU complete an operation with just one calculation instead of the several thousand needed by generalist hardware. For example, IBM claims that NPUs can radically improve the efficiency of AI calculation compared to GPUs.

“Testing has shown some NPU performance to be over 100 times better than a comparable GPU, with the same power consumption.”

Because of this energy efficiency, NPUs are popular with manufacturers for installation in user devices, where they can help perform tasks for generative AI apps locally, an example of “edge computing” (see below for more on that topic).

Many methods are currently being explored to create neuromorphic chips:

- Leveraging incipient ferroelectricity, a still poorly understood phenomenon.

- Building active substrates using vanadium or titanium.

- Using memristors, a new type of electronic component that can perform AI tasks at 1/800th of the normal power consumption.

Auxiliary Processing Unit (XPUs)

An XPU merges CPU (processor), GPU (graphics card / parallel processor), and memory into the same electronic device.

Source: Broadcom

XPU is a broad term, encompassing many variations of this concept of bringing all the hardware into self-contained units, including Data Processing Units (DPUs), Infrastructure Processing Units (IPUs), and Function Accelerator Cards (FACs).

XPUs are seen as solving a growing problem of AI data centers: the increasing need for connectivity between sub-units, to the point where data lag, more than the available computing power, becomes the main factor slowing down computing.

Essentially, the chips (GPUs, TPUs, NNPs, etc.) are waiting on the data as much as they are actually working.
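One common way to reason about chips waiting on data is the "roofline" model, sketched below. It is a general performance model rather than anything specific to XPUs, and the peak compute and bandwidth figures are hypothetical round numbers, not the specifications of any real product.

```python
def attainable_tflops(peak_tflops, bandwidth_tb_s, flops_per_byte):
    """Roofline model: throughput is capped by whichever is lower,
    the chip's peak compute or what the data link can feed it.
    (TB/s times FLOPs per byte gives TFLOP/s.)"""
    return min(peak_tflops, bandwidth_tb_s * flops_per_byte)

def utilization(peak_tflops, bandwidth_tb_s, flops_per_byte):
    """Fraction of the chip's peak compute that is actually usable."""
    achieved = attainable_tflops(peak_tflops, bandwidth_tb_s, flops_per_byte)
    return achieved / peak_tflops

# Hypothetical accelerator, for illustration only.
PEAK = 1000.0      # TFLOP/s of raw compute
BANDWIDTH = 4.0    # TB/s of data delivered to the compute units

# A workload doing few operations per byte fetched is starved for data.
memory_bound = utilization(PEAK, BANDWIDTH, flops_per_byte=50)

# A workload reusing each byte many times can keep the chip busy.
compute_bound = utilization(PEAK, BANDWIDTH, flops_per_byte=500)
print(memory_bound, compute_bound)
```

In the first case the chip sits idle most of the time, so buying more compute would not help. Raising the bandwidth term, by moving memory and processors closer together, is the lever that tighter integration is meant to pull.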

A leader in this technology is Broadcom (AVGO), which we discussed in detail in a dedicated investment report.

Field-Programmable Gate Arrays (FPGAs):

FPGAs are programmable processors, making them significantly more flexible and reconfigurable than the more rigid ASICs. FPGAs can be customized for specific AI algorithms, potentially offering higher performance and energy efficiency.

Source: Microcontrollers Labs

The flexibility comes at a cost, as FPGAs are generally more complex, more expensive, and consume more electricity. They can, however, still be more efficient than generalist hardware.

This makes them somewhat of a niche product, used where their flexibility compensates for the disadvantages. For example, machine learning, computer vision, and natural language processing can benefit from FPGAs’ versatility.

High Bandwidth Memory (HBM):

The most important developments in custom AI-centric hardware have been in the field of computing power, for a long time the chokepoint in building more computing capacity to train new AIs.

Still, these systems need high-efficiency support systems as well, of which memory is an important one. HBM provides, as its name indicates, higher bandwidth than traditional DRAM.

It is achieved by stacking multiple DRAM dies vertically and connecting them with through-silicon vias (TSVs). The first generation of HBM was developed in 2013.

The vertical stacking saves space and reduces the physical distance the data needs to travel, speeding up the transfer of data, a must in AI computing.
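The bandwidth advantage follows from simple arithmetic: peak bandwidth is the interface width multiplied by the data rate per pin. The sketch below uses illustrative figures, roughly in the range of an HBM2-class stack and a single conventional graphics memory chip; they are assumptions for the example, not values taken from this article or from a specific datasheet.

```python
def bandwidth_gb_s(bus_width_bits, gbit_per_pin):
    """Peak bandwidth = interface width x per-pin data rate.
    Dividing by 8 converts gigabits to gigabytes."""
    return bus_width_bits * gbit_per_pin / 8

# Assumed, illustrative figures.
# Stacked memory: a very wide interface at a modest speed per pin.
hbm_stack = bandwidth_gb_s(bus_width_bits=1024, gbit_per_pin=2.0)

# Conventional chip: a narrow interface driven at a high speed per pin.
narrow_chip = bandwidth_gb_s(bus_width_bits=32, gbit_per_pin=16.0)

print(hbm_stack, narrow_chip)
```

Stacking dies and connecting them with TSVs is what makes such a wide interface practical, so the stack moves more data per second even though each individual pin runs slower.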

HBMs are more complex to manufacture and more expensive than conventional DRAM, but the performance and power efficiency benefits often justify the higher cost for AI applications.

AI Data Center Infrastructure: Power, Cooling & Connectivity

Besides the memory and computing power, the auxiliary systems of AI data centers are also important. Without them, the data cannot circulate fast enough, the chips would overheat, or the power available would be insufficient.

This means that Broadcom’s connectivity hardware, for example, also benefits greatly from the AI data center buildout, as do suppliers of specialized solutions such as cooling equipment, for example Vertiv (VRT) or Schneider Electric (SU.PA).

Power supply might also become an issue, and several tech giants are trying to tackle it by betting on nuclear energy, with Microsoft making the first move in 2024, followed by many others since.

Combined with tech companies’ commitment to lower the carbon footprint of AI, this should greatly benefit companies in the nuclear or renewable energy sector, like Cameco (CCJ), GE Vernova (GEV), First Solar (FSLR), NextEra (NEE), or Brookfield Renewable Partners (BEP).

Emerging AI Computing Technologies

Quantum Computing

Because AI is so hungry for computing power, it is possible that the future of the field’s hardware does not even lie with the currently available silicon solutions.

One possibility is that quantum computing could be used to detect patterns much more efficiently than classical computing ever could, something already explored by researchers.

Quantum computing as a whole could be used for solving some specific calculations that are almost impossible with binary computing. This likely will ultimately be applied to AI, but the first commercial quantum computers are still a few years away, and a large quantum network even further away.

Photonics

Using light instead of electrons to carry data, photonics could be much quicker than electronic devices.

Because quantum computers usually carry quantum data with entangled photons, there is also a lot of overlap between quantum computing and photonics, and the first dual quantum-photonic chip has already been announced.

Organoids

As most AI replicates in computers the functioning of the brain’s neural networks, some researchers are wondering if we could not instead use … actual brain cells.

This is an intriguing idea, especially as some research suggests that the brain might actually be an organic quantum computer.

This type of “computer” is built from organoids, which essentially consist of neurons grown in a lab on a computer chip. The neurons then self-organize their dendrites and connections in response to the chip stimulus.

This technology is still new and relies on bio-3D printing.

Others

We explored other alternatives to silicon computing in “Top 10 Non-Silicon Computing Companies”, such as vanadium dioxide, graphene, redox gating, or organic materials.

Each promises to either be much quicker or much less energy-intensive than classical silicon-based computing. They are, however, still relatively new and unlikely to revolutionize the field of AI at commercial scale, at least for the next 5-10 years.

Cloud AI and Edge AI: Accessibility Trends

Cloud AI

As the most powerful AI systems are made by large tech companies, they are mostly accessible through the cloud. The same is becoming true for access to AI-specialized hardware itself.

The leader of this trend is CoreWeave (CRWV), a company that moved from cryptocurrency mining using GPUs to cloud services, and today provides on-demand AI compute.

This made CoreWeave a key partner of up-and-coming AI startups trying to compete with the tech giants, like Inflection AI and its $1.3B GPU cluster, paid for by a fresh funding round.

“Two months ago, a company may not have existed, and now they may have $500 million of venture capital funding.

And the most important thing for them to do is secure access to compute; they can’t launch their product or launch their business until they have it,”

As pure-play AI hardware makers become wary of big tech producing its own GPUs, TPUs, XPUs, etc., and evolving from client to competitor, it is likely that companies like CoreWeave will get priority access to the latest hardware releases by Nvidia and others.

This business model will likely be especially important for AI training, which is a lot more demanding in computing capacity than just using the already trained AIs.

Edge Computing & AI PCs

Another quickly evolving area of AI computing is the need to run AI systems on-site, as close as possible to real-life situations.

This is a must for systems that cannot tolerate being cut off from AI if the connection fails, or when the round trip to the cloud adds too much latency.

A good example is self-driving cars, which are expected to interpret their environment offline.

This type of calculation is called edge computing, and benefits greatly from more efficient and less power-hungry hardware.

It can increase AI reliability, and as models become more efficient, illustrated by the leap forward of DeepSeek, it might become a more prevalent model of AI deployment in the future.

For the same reason, AI PCs, like the one recently launched by Nvidia, might in the long run be enough to run many AI applications locally, increasing privacy and security compared to AIs that are always connected to the cloud.

Conclusion

AI hardware has, for a while, been somewhat synonymous with GPUs, as graphics cards were a lot more efficient at AI training than other types of hardware like CPUs. This made the fortune of Nvidia and of many of its early shareholders.

GPUs, especially AI-focused “super GPUs”, are likely going to stay important in the building of AI data centers. But they are going to evolve into just one of the components of increasingly complex and specialized systems.

Transformer operations will be sent to TPUs, neural network tasks to NNPs, and repetitive tasks to dedicated ASICs or reconfigured FPGAs.

Meanwhile, high-bandwidth memory, advanced telecommunication connectors, and ultra-efficient cooling will keep all the auxiliary functions around the computing core running.

For edge computing and AIs smaller than the massive LLMs, local computing, maybe powered by all-in-one XPUs, will likely be used by scientists, self-driving cars, and users concerned with privacy or censorship, potentially with open-source AI models.

What is sure is that the profits from selling the “picks and shovels” of AI hardware in the AI gold rush are far from over.

After a period of domination by Nvidia, investors might want to diversify risks by spreading their AI hardware portfolio across other designs, and maybe even the power utility companies that will provide the precious gigawatts to run the world’s increasingly large and numerous AI data centers.