
Quantum Computing Achieves Unconditional Exponential Speedup

What was previously expressed only on paper has now been demonstrated in action. The promise of quantum computing has been realized: quantum computers have beaten classical computers exponentially and unconditionally [1].

A team of researchers led by Daniel Lidar, a professor of Electrical & Computer Engineering at the USC Viterbi School of Engineering, combined error suppression and mitigation techniques with IBM’s powerful 127-qubit processors to tackle a variation of Simon’s problem, demonstrating that quantum machines are now breaking free from classical limitations.

How Quantum Computing Overcomes Classical Limits and Noise

For decades, classical computing has been the norm. However, in recent years, quantum computing has undergone significant development.

An emerging area of computer science, quantum computing utilizes the principles of quantum theory (which explains the nature and behavior of matter and energy at the atomic and subatomic levels) to dramatically increase computation speeds.

Using quantum physics, quantum computing aims to solve problems that are too complex for the classical computers that we use on a daily basis. In fact, quantum computing can solve certain complex simulation problems that would take even a traditional supercomputer hundreds of thousands of years.

Achieving a genuine algorithmic advantage over classical computers is one of the key goals of quantum computing in order to enable future breakthroughs in chemistry, cryptography, optimization, and other fields.

This, however, requires specialized quantum computing hardware and algorithms that take advantage of quantum properties like superposition and entanglement. Also, noise is a major problem for quantum computers.

Proving algorithmic advantage over classical computers on today’s imperfect and noisy quantum hardware, furthermore, remains a challenge.

Designers have started to explore noisy intermediate-scale quantum (NISQ) machines, but these devices operate on a relatively small scale of up to several hundred qubits.

Also, they are prone to experience degraded performance because of decoherence (the loss of quantum coherence, which involves a loss of information of a system to its environment) and control errors.

So, one focus is on demonstrating an algorithmic quantum speedup on these devices, that is, a scaling advantage. While several such demonstrations have been made, the hardness of the problems chosen relied on either the difficulty of a restricted set of classical algorithms or computational complexity conjectures.

Recently, an algorithmic quantum speedup that does not rely on unproven assumptions was shown in the oracle model. The speedup was demonstrated for the Bernstein-Vazirani algorithm running on an IBM Quantum processor, with noise suppressed through dynamical decoupling (DD), a common error suppression technique for NISQ devices.

Now, the research team from the University of Southern California is tackling the issue of noise by implementing a variation of Simon’s problem. This is a well-known example where, in theory, quantum algorithms can solve a task exponentially faster than their classical counterparts, unconditionally.

Simon’s problem is a precursor to Shor’s algorithm, which can be used to break codes and helped launch the field of quantum computing.

It is also among the first problems for which an exponential quantum speedup was proven, though in the oracle model. A classical computer needs exponentially many oracle queries to solve the problem, while a noiseless quantum computer needs only a linear number. This comparison counts oracle queries and does not account for the resources spent executing each query.
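The linear query count can be illustrated with a small classical simulation. This is a minimal sketch, not the team’s implementation: it assumes an ideal, noiseless device on which each query to Simon’s oracle yields a uniformly random bit string y whose bitwise inner product with the secret string b is 0 modulo 2. The function names are hypothetical, and the candidate filtering is brute force for readability (a practical version would use Gaussian elimination over GF(2)).

```python
import random

def dot_mod2(x: int, y: int) -> int:
    """Bitwise inner product of two bit strings (stored as ints), modulo 2."""
    return bin(x & y).count("1") % 2

def sample_ideal_outcome(b: int, n: int, rng: random.Random) -> int:
    """Stand-in for one ideal quantum query: a random n-bit y with y.b = 0 (mod 2)."""
    while True:
        y = rng.randrange(2 ** n)
        if dot_mod2(y, b) == 0:
            return y

def recover_secret(n: int, b: int, seed: int = 0):
    """Collect outcomes until exactly one nonzero candidate satisfies every equation.

    Returns (recovered secret, number of simulated queries).
    """
    rng = random.Random(seed)
    candidates = list(range(1, 2 ** n))  # brute force; only sensible for small n
    queries = 0
    while len(candidates) > 1:
        y = sample_ideal_outcome(b, n, rng)
        queries += 1
        # Keep only the candidates consistent with the new equation y.c = 0.
        candidates = [c for c in candidates if dot_mod2(y, c) == 0]
    return candidates[0], queries

found, used = recover_secret(n=8, b=0b10110010, seed=1)
print(f"recovered {found:08b} after {used} simulated queries")
```

Each outcome gives one linear equation on b, so roughly n outcomes are enough to pin it down, which is where the linear scaling comes from.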

Formally, Simon’s problem is an Abelian hidden subgroup problem: the hidden subgroup consists of the identity and a secret string b, and the goal is to determine b. In essence, this means finding a hidden repeating pattern in a mathematical function.

In simpler terms, it’s like a guessing game, where the players try to guess a secret number, which is not known to anyone but the game host, aka the “oracle.”

The secret number is revealed once a player guesses two numbers for which the oracle gives identical answers, and that player wins. Compared to classical players, quantum players can win this game exponentially faster.
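The classical side of this guessing game can be sketched in a few lines. The example below is illustrative only: `make_simon_oracle` and `classical_guess` are hypothetical names, the oracle is a lookup table built for a small number of bits, and the secret is assumed to be nonzero. The classical player keeps querying inputs until two of them produce the same answer, at which point the XOR of those two inputs is the secret.

```python
import random

def make_simon_oracle(n: int, b: int, seed: int = 0):
    """Build a 2-to-1 function f on n-bit inputs with f(x) == f(x ^ b), for nonzero b."""
    rng = random.Random(seed)
    labels = list(range(2 ** n))
    rng.shuffle(labels)
    table = {}
    for x in range(2 ** n):
        if x not in table:
            label = labels.pop()   # a fresh answer for each pair {x, x ^ b}
            table[x] = label
            table[x ^ b] = label
    return lambda x: table[x]

def classical_guess(oracle, n: int, seed: int = 0):
    """Query inputs in random order until two answers match.

    Returns (secret, number of oracle queries used).
    """
    rng = random.Random(seed)
    order = list(range(2 ** n))
    rng.shuffle(order)
    seen = {}  # oracle answer -> input that produced it
    for queries, x in enumerate(order, start=1):
        answer = oracle(x)
        if answer in seen:
            return seen[answer] ^ x, queries
        seen[answer] = x
    raise ValueError("no matching answers found; the secret must be nonzero")

oracle = make_simon_oracle(n=10, b=0b1001100101)
secret, used = classical_guess(oracle, n=10)
print(f"secret {secret:010b} found after {used} classical queries")
```

For n bits, a classical player typically needs on the order of the square root of 2^n queries before two answers match, which grows exponentially with n.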

Achieving Unconditional Quantum Speedup

To truly help discover new materials, break codes, and design new medicines by speeding up computation, quantum computers need to work reliably.

But as we noted above, noise or errors get in the way. Errors that are produced during computations on a quantum machine end up making quantum computers even less powerful than classical computers. That was until now.

Lidar, of USC, has been working on quantum error correction, and his team has now demonstrated a quantum exponential scaling advantage using IBM quantum processors accessed over the cloud.

This was detailed in the paper, ‘Demonstration of Algorithmic Quantum Speedup for an Abelian Hidden Subgroup Problem,’ in which Lidar worked with collaborators from USC and Johns Hopkins.

“There have previously been demonstrations of more modest types of speedups like a polynomial speedup. But an exponential speedup is the most dramatic type of speed up that we expect to see from quantum computers.”

– Lidar

The main breakthrough for quantum computing, according to Lidar, is demonstrating that entire algorithms can be executed with a scaling speedup relative to ordinary classical computers. But as he clarified, that doesn’t mean you can do things 100x faster.

But what scaling speedup means is that “as you increase a problem’s size by including more variables, the gap between the quantum and the classical performance keeps growing. And an exponential speedup means that the performance gap roughly doubles for every additional variable,” explained Lidar.
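To make the idea of a growing gap concrete, here is a small numerical sketch. The cost models are assumptions chosen purely for illustration (2^n steps for the classical side, n steps for the quantum side) and are not the metrics measured in the study.

```python
def illustrative_gap(n: int) -> float:
    """Ratio of an assumed exponential cost (2**n) to an assumed linear cost (n)."""
    return (2 ** n) / n

def growth_per_variable(n: int) -> float:
    """Factor by which the illustrative gap grows when one more variable is added."""
    return illustrative_gap(n + 1) / illustrative_gap(n)

for n in (5, 10, 20, 40):
    print(f"n={n:2d}  gap={illustrative_gap(n):.3g}  growth={growth_per_variable(n):.3f}")
```

Under these assumed cost models the growth factor equals 2n/(n+1), which approaches 2 as n increases. A fixed “100x faster” speedup, by contrast, would keep the gap constant no matter how large the problem gets.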

He then stated that the speedup the team has shown is “unconditional.” Now, what this means is that the speedup doesn’t depend on any unproven assumptions.

Previous speedup claims needed the assumption that there is no better classical algorithm to benchmark the quantum algorithm against.

The team here used an algorithm that they modified for the quantum computer to solve a variation of “Simon’s problem.”

Now, to achieve the exponential speedup, “the key is squeezing every ounce of performance from the hardware: shorter circuits, smarter pulse sequences, and statistical error mitigation,” noted first author Phattharaporn Singkanipa, who’s a USC doctoral researcher.

The team achieved this in four different ways. The researchers first limited the data input by restricting the number of secret numbers allowed. Technically, this is done by limiting the number of 1’s in the binary representation of the set of secret numbers. This led to fewer quantum logic operations than otherwise needed, in turn, reducing the chances for error buildup.
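The effect of the restriction on the number of 1’s can be sketched as follows. This is an illustration under stated assumptions: the helper names are hypothetical, the all-zero string is excluded here as a modeling choice, and the weight limit used in the example is arbitrary rather than taken from the paper.

```python
from math import comb

def hamming_weight(b: int) -> int:
    """Number of 1's in the binary representation of b."""
    return bin(b).count("1")

def allowed_secrets(n: int, max_weight: int) -> list:
    """All nonzero n-bit secrets with at most max_weight 1's (brute force, small n only)."""
    return [b for b in range(1, 2 ** n) if hamming_weight(b) <= max_weight]

def count_allowed(n: int, max_weight: int) -> int:
    """Closed-form count: the sum of C(n, k) for k = 1 .. max_weight."""
    return sum(comb(n, k) for k in range(1, max_weight + 1))

n, w = 12, 3
print(f"{count_allowed(n, w)} allowed secrets out of {2 ** n - 1} nonzero {n}-bit strings")
```

With the weight capped, the number of allowed secrets grows polynomially in n instead of exponentially, which gives a sense of how much smaller the restricted input set is.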

Then they compressed the required quantum logic operations through transpilation, a process of rewriting a given input to match the topology of a particular quantum device.

Next, a method called “dynamical decoupling” was applied and had the most impact on researchers’ ability to demonstrate a quantum speedup. What this method involves is applying sequences of carefully designed pulses in order to separate a qubit’s behavior from its noisy environment and keep the quantum processing on course.
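The principle behind dynamical decoupling can be seen in a toy model. The sketch below is not the pulse sequence used in the study: it assumes a single qubit whose only noise is a random but static frequency offset, and an ideal, instantaneous flip pulse at the midpoint of the evolution (the simplest such sequence, often called a spin echo). All names and parameter values are hypothetical.

```python
import cmath
import random

def average_coherence(detunings, total_time: float, echo: bool) -> float:
    """Average of exp(i * phase) over noise samples, returned as a magnitude in [0, 1].

    Without a pulse, each sample accumulates phase = detuning * total_time.
    With one ideal flip pulse at the midpoint, the second half accumulates phase
    with the opposite sign, so a static detuning cancels out.
    """
    total = 0j
    for delta in detunings:
        if echo:
            phase = delta * total_time / 2 - delta * total_time / 2
        else:
            phase = delta * total_time
        total += cmath.exp(1j * phase)
    return abs(total) / len(detunings)

rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(2000)]  # assumed static noise samples
print("free evolution:", round(average_coherence(noise, 3.0, echo=False), 3))
print("with one pulse:", round(average_coherence(noise, 3.0, echo=True), 3))
```

In this idealized setting the pulse restores the coherence completely. Real hardware noise fluctuates in time and pulses are imperfect, which is why practical sequences are more elaborate and need careful design.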

Finally, the researchers applied measurement error mitigation (MEM) to find and correct certain errors. This step rectifies errors that remain after dynamical decoupling because of imperfections in measuring the qubits’ state at the end of the algorithm.
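One common way to mitigate measurement errors is to calibrate how often each readout value is misreported and then invert that relationship. The single-qubit sketch below illustrates this idea only; whether the study used this exact approach is not stated here, and the function names and error rates are hypothetical.

```python
def apply_readout_noise(true_p0: float, true_p1: float, p01: float, p10: float):
    """Distort true outcome probabilities with readout errors.

    p01: probability of reading 1 when the qubit was in state 0
    p10: probability of reading 0 when the qubit was in state 1
    """
    measured_p0 = (1 - p01) * true_p0 + p10 * true_p1
    measured_p1 = p01 * true_p0 + (1 - p10) * true_p1
    return measured_p0, measured_p1

def mitigate_readout(measured_p0: float, measured_p1: float, p01: float, p10: float):
    """Undo the distortion by inverting the 2x2 calibration matrix
    A = [[1 - p01, p10], [p01, 1 - p10]], where measured = A @ true.
    """
    det = (1 - p01) * (1 - p10) - p01 * p10
    true_p0 = ((1 - p10) * measured_p0 - p10 * measured_p1) / det
    true_p1 = (-p01 * measured_p0 + (1 - p01) * measured_p1) / det
    return true_p0, true_p1

# Assumed calibration values, chosen only for illustration.
noisy = apply_readout_noise(0.7, 0.3, p01=0.02, p10=0.05)
print("measured: ", noisy)
print("mitigated:", mitigate_readout(*noisy, p01=0.02, p10=0.05))
```

With finite sampling, the inverted probabilities can fall slightly outside the range 0 to 1, so practical schemes usually add a step that projects the result back onto valid probabilities.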

Paving the Way to Quantum Utility

With quantum computing offering significant advantages in fields like logistics, materials science, financial modeling, AI, and cybersecurity by leveraging quantum mechanical phenomena to solve complex problems, the market is seeing significant contributions and growth.

The community has also begun to show how quantum processors can outperform their classical counterparts in targeted tasks.

“Our result shows that already today’s quantum computers firmly lie on the side of a scaling quantum advantage,” said Lidar, who’s also a professor of Chemistry and Physics at the USC Dornsife College of Letters, Arts and Sciences and the co-founder of Quantum Elements, a company paving the way to quantum utility at scale and connecting users with quantum computers.

A couple of months ago, the Quantum Elements team reported a breakthrough [2]. Their novel technique, logical dynamical decoupling, tackles logical errors, a constant challenge in quantum computing.

The team demonstrated how this particular pathway prevents errors that traditional error correction codes can’t address, all the while keeping a limited qubit footprint.

They combined error correction with logical dynamical decoupling, which allowed the team to improve the fidelity of entangled logical qubits significantly, bringing practical quantum applications all that much closer to becoming reality.

With the latest research, meanwhile, Lidar said, “the quantum performance advantage is becoming increasingly difficult to dispute,” as the performance separation cannot be reversed because the demonstrated exponential speedup is “unconditional.”

The study shows an unequivocal algorithmic quantum speedup for a restricted Hamming-weight (HW) version of the problem using two different IBM Quantum processors. The researchers found an enhanced quantum speedup when the computation is protected by DD. The use of MEM enhanced the scaling advantage further.

Dynamical decoupling and MEM were used for error suppression and mitigation, and the problem was modified to suit real quantum devices. Together, these steps helped maintain quantum coherence and improve accuracy despite hardware limitations.

With their experiments, the researchers have brought NISQ algorithms all that much closer to a demonstration of a quantum speedup through Shor’s algorithm and highlighted the key role quantum error suppression techniques play in such a demonstration.

Demonstrating an exponential speedup in solving the problem on actual quantum hardware, according to the researchers, is “an important milestone for the field.” Besides bridging the gap between theory and practice, their results also emphasize the growing capabilities of current quantum processors. The study noted:

“As hardware continues to improve, our approach paves the way for even more powerful demonstrations of quantum advantage in the near future.”

Despite all this, there aren’t any practical applications of the technology beyond winning guessing games. This has actually been true for other advancements in the field as well.

“We need a ChatGPT moment for quantum,” Francesco Ricciuti, an associate at VC firm Runa Capital, told CNBC in December 2024, when Google unveiled a new chip that it said marked a major breakthrough in quantum computing.

Google’s quantum chip is called Willow, which has 105 qubits and can reportedly reduce errors “exponentially” as the number of qubits is scaled up. This “cracks a key challenge in quantum error correction that the field has pursued for almost 30 years,” said Hartmut Neven, founder of Google Quantum AI.

Willow performed a computation that would take today’s fastest supercomputers 10 septillion years, in less than five minutes.

“They are trying to define a really high problem for normal computers that they can solve with quantum computers. It is amazing they can do that, but it doesn’t really mean it is useful,” Ricciuti said at the time.

Even Google said that its random circuit sampling (RCS) benchmark has “no known real-world applications,” and that the “scientifically interesting simulations of quantum systems” it has run, which have led to new scientific discoveries, are “still within the reach of classical computers.”

The tech giant, however, is working on stepping into the realm of algorithms that are not only beyond the reach of classical computers but are also “useful for real-world, commercially relevant problems.”

Earlier this year, Julian Kelly, director of hardware at Google Quantum AI, said that we may be about “five years out from a real breakout, kind of practical application that you can only solve on a quantum computer.”

Nvidia CEO Jensen Huang also believes quantum computing can “deliver extraordinary impact,” but noted that the tech is “insanely complicated.”

According to Lidar, “much more work remains to be done before quantum computers can be claimed to have solved a practical real-world problem.” And that would require speedups that do not depend on oracles that know the answer in advance. Moreover, we would have to make significant advances in methods to reduce decoherence and noise further.

Still, by demonstrating exponential speedups, which was previously just an “on-paper promise” of quantum computers, researchers have achieved a major milestone, which is worth celebrating.

Investing in Quantum Technology

With quantum computers marking a major leap forward in computing capability, numerous labs, universities, companies, and government agencies around the world are developing quantum computing technology.

So, when it comes to investing opportunities, we have Amazon (AMZN), Intel (INTC), and Microsoft (MSFT) among others actively exploring the space. But today, we’ll take a look at the investing potential of IBM (IBM), a pioneer in quantum hardware.

International Business Machines Corporation (IBM)

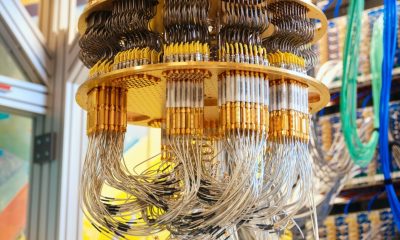

IBM’s 127-qubit processors were used in the USC experiment itself. IBM first unveiled this processor, dubbed Eagle, in November 2021. It followed the 65-qubit ‘Hummingbird’ processor launched in 2020 and the 27-qubit ‘Falcon’ processor launched a year before that.

USC is actually an IBM Quantum Innovation Center, while Quantum Elements is a startup in the IBM Quantum Network.

For focused efforts in the field, the company has a dedicated platform, IBM Quantum, that aims to build the first large-scale fault-tolerant quantum computer. The tech giant aims to deliver a system that accurately runs 100 million gates on 200 logical qubits by 2029. With this system, IBM will be “unlocking the first viable path to realizing the full power of quantum computing.”

IBM is building this quantum computer called “Starling” at its New York campus, and it would support a deep, error-corrected circuit. As per its roadmap, the company is also planning a new IBM Quantum Nighthawk processor to be released later this year.

Last month, it deployed a Quantum System Two at a research center in Japan. And this week, the tech giant participated in startup Qedma’s $26 million funding round with its CEO expecting to demonstrate this year “with confidence that the quantum advantage is here.” Qedma is already available through IBM’s Qiskit Functions Catalog, which makes quantum accessible to end users.

While leading quantum tech, the company is primarily known for its cloud, AI, and consulting expertise, which it provides through the Software, Consulting, and Infrastructure segments.

As for IBM’s market performance, shares of the $268.6 billion market cap company are trading at $289 as of writing, up 30.85% YTD. IBM stock has climbed 145% over the last three years, hitting fresh highs as the company pitches itself as the provider of next-gen enterprise tech.

It has an EPS (TTM) of 5.85, a P/E (TTM) of 49.81, and an ROE (TTM) of 21.95%. The dividend yield available to shareholders, meanwhile, is an attractive 2.31%.


As for its financial performance, IBM reported a 1% increase in its revenue to $14.5 billion for the first quarter of 2025. Its GAAP gross profit margin was 55.2% and its non-GAAP gross profit margin was 56.6%. Net cash from operating activities was $4.4 billion, while free cash flow was $2 billion.

CEO Arvind Krishna attributed revenue, profitability, and free cash flow surpassing expectations to “strong demand for generative AI,” with IBM remaining “bullish on the long-term growth opportunities for technology and the global economy.”


Conclusion

Demonstrating an algorithmic quantum speedup, one that scales with the size of the problem, is key to establishing the utility of quantum computers. So, the demonstration of an unconditional, exponential speedup marks a watershed moment in quantum computing, proving that today’s devices can break free from classical limits.

This achievement by researchers significantly extends the scope of quantum speedups for oracular algorithms, expands the frontier of empirical quantum advantage results, and points to practically relevant algorithms finally being within reach.

Overall, the journey of quantum computers toward practical, everyday applications is still unfolding, and continued improvements will be needed to unlock the full power of quantum technology.


Studies Referenced:

1. Singkanipa, P.; Kasatkin, V.; Zhou, Z.; Quiroz, G.; Lidar, D. A. Demonstration of Algorithmic Quantum Speedup for an Abelian Hidden Subgroup Problem. Phys. Rev. X 2025, 15 (2), 021082. https://doi.org/10.1103/PhysRevX.15.021082

2. Vezvaee, A.; Tripathi, V.; Morford-Oberst, M.; Butt, F.; Kasatkin, V.; Lidar, D. A. Demonstration of High-Fidelity Entangled Logical Qubits using Transmons. arXiv 2025, arXiv:2503.14472. https://doi.org/10.48550/arXiv.2503.14472